Responsible Data Collection: Consent and Compliance (Practical)

This practical guide demystifies responsible data collection for AI, moving beyond abstract principles to actionable steps. You'll learn how to navigate the core pillars of ethical data practices: Lawful Basis and Consent, Transparency, Data Minimization, Security, and Accountability. The article breaks down complex regulations like GDPR and CCPA into clear requirements, providing templates and checklists for privacy notices, consent forms, and data mapping. It addresses modern challenges, from collecting data for generative AI models to using third-party datasets, emphasizing that trust built through ethical data handling is a key competitive advantage. Designed for entrepreneurs, developers, and project managers, this guide equips you to build AI solutions that are not only innovative but also respectful and compliant.

Responsible Data Collection: Consent and Compliance (Practical)

Data is the essential fuel for artificial intelligence. It's what allows a recommendation system to know your taste in movies, a chatbot to understand your questions, and a diagnostic tool to recognize patterns in medical scans. Yet, the process of gathering this data is fraught with ethical and legal pitfalls. Headlines about privacy breaches, biased algorithms, and unauthorized data scraping have made the public—and regulators—rightfully wary.

For anyone building or using AI, this means that how you collect data is just as important as what you do with it. Responsible data collection isn't a bureaucratic hurdle; it's the foundation of user trust, product integrity, and legal sustainability. A model built on poorly sourced, unconsented, or messy data is a liability waiting to happen, prone to inaccuracy, bias, and public backlash[citation:1].

This guide moves beyond abstract principles to provide a practical, step-by-step framework for collecting data responsibly. Whether you're a startup founder training your first model, a developer scraping public information for a project, or a manager overseeing an AI initiative, these practices will help you navigate the complex landscape of consent, compliance, and ethics.

Why “Responsible” Data Collection is Non-Negotiable

The journey toward responsible AI begins at the very first step: data acquisition. The old adage "garbage in, garbage out" has never been more relevant. Feeding an AI system data that is collected unethically, illegally, or carelessly corrupts every output it generates.

First, there is a direct business risk. Laws like the European Union's General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), and a growing number of similar regulations worldwide impose significant financial penalties for non-compliance—often in the millions of dollars or a percentage of global revenue. Beyond fines, the reputational damage from a privacy scandal can be catastrophic, eroding hard-won customer trust in an instant.

Second, unethical data collection perpetuates and amplifies bias. If your training data over-represents one demographic group or contains historical prejudices, your AI will learn and replicate those biases[citation:1]. This can lead to discriminatory outcomes in critical areas like hiring, lending, and healthcare. Researchers have demonstrated that AI systems can exhibit unfair discrimination based on gender, culture, or religion, often because the underlying data was flawed[citation:6]. Starting with clean, representative, and ethically sourced data is the most effective way to mitigate this risk downstream.

Finally, there is an ethical imperative. Data often represents real people—their behaviors, identities, and personal lives. Treating this data with respect is a fundamental responsibility. As AI becomes more integrated into sensitive domains like mental health support, the stakes are incredibly high. Studies have shown that AI chatbots can violate core ethical standards, in part due to how they are trained and prompted with user data[citation:6]. Responsible collection is the first commitment to doing no harm.

In essence, responsible data collection transforms data from a potential liability into a strategic asset built on a foundation of trust.

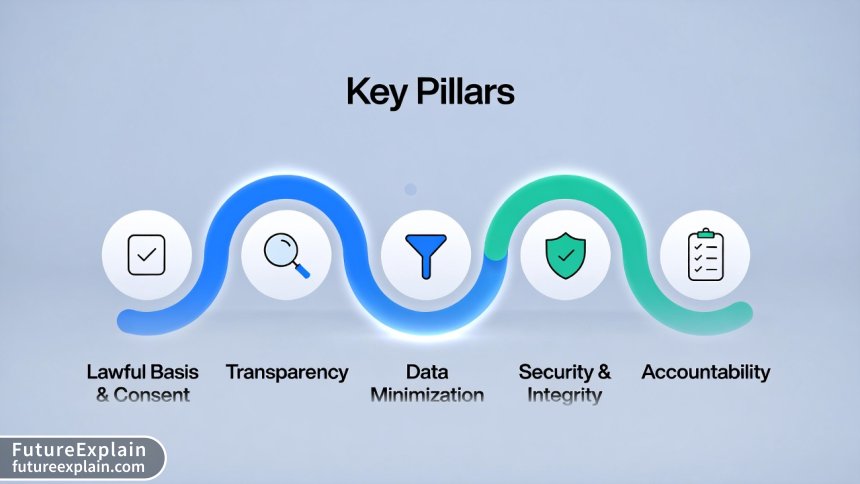

The Five Pillars of Responsible Data Collection: A Practical Framework

To navigate this complex terrain, you can build your strategy around five core pillars. Think of these not as a one-time checklist, but as integrated principles guiding every decision you make about data.

1. Lawful Basis and Informed Consent

This is the cornerstone. You must have a legally valid reason to process someone's personal data. Under regulations like GDPR, there are several possible "lawful bases," but for most AI training scenarios involving personal data, explicit, informed consent is the gold standard and often the safest route.

What does "informed consent" actually mean in practice? It's more than a pre-ticked box buried in a lengthy Terms of Service agreement. For consent to be valid, it must be:

- Freely Given: The user must have a genuine choice. You cannot deny core service functionality if a user refuses consent for non-essential data processing (like model training).

- Specific: Consent must be sought for each distinct purpose. A blanket consent for "improving our services" is too vague. You need separate consent for "training our AI recommendation algorithm."

- Informed: The user must know what they are agreeing to. This leads directly to our next pillar, Transparency.

- Unambiguous: It must involve a clear affirmative action—a click, a signature, a verbal "yes." Silence or inactivity does not count.

- Easy to Withdraw: Users must be able to revoke their consent as easily as they gave it, and you must stop processing their data upon withdrawal.

Practical Step: Design your consent flows with granular options. Instead of one "I Agree" button, offer: "Yes, sign me up for the newsletter," "Yes, I allow my usage data to improve the app's core features," and "Yes, I agree for my anonymized data to be used to train future AI models." This respects user autonomy and provides the specificity regulators demand.

2. Transparency and Open Communication

You cannot consent to something you don't understand. Transparency is the practice of clearly communicating your data practices in language anyone can understand. This is typically fulfilled through a Privacy Notice or Privacy Policy, but that document should be the finale, not the only act.

Transparency should be layered and contextual. A user should understand the basics at the point of collection. For example, a tool that uses voice recordings to train a speech model should have a clear message: "We will use this recording solely to improve the accuracy of our voice recognition system. You can delete your recordings anytime in your account settings. Learn more about how we protect your data."

Your full privacy notice should then detail:

- What data you collect: Be precise (e.g., "text prompts," "image uploads," "interaction timestamps").

- Why you collect it (the purpose): Distinguish between purposes necessary for service delivery (e.g., "to authenticate your account") and optional purposes like AI training.

- How you use it: Will it be anonymized? Who has access? How long is it retained?

- Third-party sharing: Do you share data with cloud providers, analytics services, or research partners? Name them and state why.

- User rights: Explain how users can access, correct, delete, or export their data, and how to withdraw consent.

Practical Step: Use icons, short videos, or interactive FAQs to explain your data use. Link to your privacy policy at every relevant touchpoint (sign-up, data upload, settings page).

Visuals Produced by AI

3. Data Minimization and Purpose Limitation

This principle is your best defense against scope creep and unnecessary risk. Data Minimization means you should only collect the data that is directly relevant and necessary to accomplish your specified purpose. Purpose Limitation means you cannot later reuse that data for a new, incompatible purpose without obtaining new consent.

Ask yourself: Do you really need to collect a user's birthdate, gender, or location to train your text-based customer support chatbot? Often, the answer is no. The more data you collect, the greater your storage costs, security burden, and potential liability in the event of a breach.

For AI training, this often points toward anonymization or pseudonymization. Can you remove or encrypt directly identifying information (names, email addresses, IDs) from the dataset before using it to train your model? This significantly reduces privacy risks. However, note that in complex datasets, re-identification is sometimes possible, so robust anonymization is a technical challenge in itself.

Practical Step: Conduct a "data minimization audit" for every form and data collection point in your product. For each field, document the exact purpose it serves. If you can't justify it, remove it.

4. Security and Integrity

Collecting data responsibly includes a duty to protect it. You must implement appropriate technical and organizational measures to guard against unauthorized access, accidental loss, or destruction. The level of security should be proportional to the sensitivity of the data.

Basic measures include:

- Encryption: Data should be encrypted both in transit (using HTTPS/TLS) and at rest.

- Access Controls: Strictly limit internal access to personal data on a need-to-know basis. Use strong authentication (like two-factor authentication) for administrative accounts.

- Regular Security Testing: Conduct vulnerability assessments and penetration tests.

- Data Integrity Checks: Ensure your data pipelines don't corrupt or inadvertently alter the source data.

Security is not just an IT issue; it's an organizational one. Do employees receive data protection training? Is there a clear incident response plan for a data breach? These are key components of responsible stewardship.

5. Accountability and Governance

This pillar is about proving you are following the rules. It requires you to document your decisions and processes. Accountability means taking responsibility for your data practices and being able to demonstrate your compliance to users and regulators.

Key practices include:

- Maintaining a Record of Processing Activities (RoPA): This is a GDPR requirement but a good practice for everyone. It's a living document that catalogs what data you collect, why, where it's stored, who you share it with, and how long you keep it.

- Conducting Data Protection Impact Assessments (DPIAs): For projects that are high-risk (e.g., using biometric data, large-scale profiling), you should formally assess the privacy risks and identify measures to mitigate them before you start.

- Implementing Privacy by Design and by Default: Bake data protection into the design of your systems and processes from the very beginning, and set the most privacy-friendly options as the default for users.

Practical Step: Start a simple RoPA using a spreadsheet or dedicated tool. Map out one of your key data flows. This exercise alone will reveal gaps and opportunities for improvement in your process.

Visuals Produced by AI

Navigating the Legal Landscape: GDPR, CCPA, and Beyond

Understanding the key regulations is crucial. Here’s a simplified overview of two major laws, but remember, local counsel is essential for compliance.

General Data Protection Regulation (GDPR)

This EU law applies to any organization that processes the personal data of individuals in the EU, regardless of where the organization is located.

Key Concepts for AI Builders:

- Personal Data: Broadly defined as any information relating to an identifiable person. This includes online identifiers (IP addresses, cookie IDs) and could encompass data points that, when combined, identify an individual.

- Special Category Data: Data revealing racial/ethnic origin, political opinions, religious beliefs, genetic data, biometric data for ID purposes, health data, or sexual orientation. Processing this data is prohibited unless you meet a specific exception (like explicit consent). Using such data for AI training requires extreme caution and robust justification.

- Data Subject Rights: GDPR grants individuals powerful rights, including the right to access their data, correct it, erase it ("the right to be forgotten"), and object to its processing. Your systems must be able to fulfill these requests.

California Consumer Privacy Act (CCPA) / California Privacy Rights Act (CPRA)

This California state law shares similarities with GDPR but has its own nuances. It applies to for-profit entities doing business in California that meet certain thresholds.

Key Concepts for AI Builders:

- Right to Opt-Out of Sale/Sharing: A core CCPA right is the ability for consumers to opt-out of the "sale" or "sharing" of their personal information. The definition of "sale" is broad and can include sharing data with a third-party AI model provider for training, even if no money changes hands.

- Right to Limit Use of Sensitive Information: The CPRA amendment adds a right for consumers to limit the use of their sensitive personal information (similar to GDPR's special category data).

- Notice at Collection: You must inform users of the categories of personal information you collect and the purposes at or before the point of collection.

The trend is clear: more jurisdictions are enacting similar laws. Brazil's LGPD, Canada's PIPEDA, and emerging laws in US states like Colorado and Virginia all follow this general paradigm of individual rights and corporate accountability.

Special Considerations for AI and Machine Learning Projects

AI projects introduce unique challenges to the data collection paradigm.

Collecting Data for Generative AI Models

Training models like large language models (LLMs) or image generators requires massive datasets. Often, this involves scraping publicly available data from the web. Is this legal? The legal landscape is evolving rapidly. Courts have generally held that training models on copyrighted works may fall under fair use considerations, but this is not a universal guarantee and is being actively litigated[citation:10]. However, using personal data scraped from the web for training is a much riskier proposition and likely violates principles of lawful basis and consent.

Best Practice: Prioritize data sources with clear licenses (e.g., Creative Commons, open data initiatives). For web scraping, implement robust filtering to exclude personal data. Consider using already-curated, licensed training datasets from reputable providers. Transparency is key—be clear in your documentation about your training data sources.

Working with Third-Party and Synthetic Data

You may purchase datasets from vendors or use synthetic data (AI-generated data designed to mimic real data).

- Third-Party Data: Conduct due diligence. Ask the vendor: What is the lawful basis for this data? Was consent obtained for the specific purpose of AI training? Can you see their privacy policy and data processing agreements? Your liability does not necessarily end with the vendor.

- Synthetic Data: This can be a powerful tool for privacy preservation, as it removes direct links to real individuals. However, if the synthetic data is generated from a model trained on personal data, biases from the original dataset can persist. Furthermore, if the synthetic data is too accurate, it may still be considered personal data under some interpretations of the law.

The Human in the Loop for Sensitive Domains

As research into AI for mental health support shows, deploying AI in high-stakes domains requires exceptional care[citation:6]. Data collection in these contexts must involve strong oversight. This means having clear ethical review protocols, ensuring data used for training is of the highest quality and sensitivity, and maintaining a "human-in-the-loop" for critical decisions. The principle of accountability is paramount here, requiring thorough documentation and governance structures that may exceed baseline legal requirements.

Building a Responsible Data Collection Workflow: A Starter Template

Let's translate these principles into a concrete action plan for a hypothetical project: training a specialized chatbot for customer support in a small business. Phase 1: Planning & Design (Before a single byte is collected)

- Define Purpose: "To train a model to answer frequently asked questions about our product's features and troubleshooting."

- Conduct a Mini-DPIA: Identify risks: Could the chatbot learn and repeat sensitive customer information accidentally shared in logs? Mitigation: Scrub logs of personal identifiers before training.

- Determine Lawful Basis: We will rely on legitimate interest for improving our core service, but we will also seek explicit consent for a more expansive training set, being transparent about the benefits.

- Design for Minimization: We will only use chat transcript text. We will automatically strip out customer names, email addresses, and order numbers.

- Craft Transparent Notices: Update the chat interface: "Conversations may be used to improve our automated support. Learn more." In the privacy policy, add a dedicated section on AI Training.

- Create a Granular Consent Flow: On the user settings page: "Help us improve: [ ] I agree to let my anonymized chat conversations be used to train our AI assistant to provide better help to future customers."

- Secure the Pipeline: Ensure all chat logs are encrypted and access is restricted to the data science team.

- Update the RoPA: Document this new processing activity: Purpose, Data Categories, Retention Period (e.g., 3 years after anonymization), Security Measures.

- Establish an Exercise Protocol: Create a simple process for how you will respond if a user exercises their right to deletion. (e.g., Find their data in source systems, delete it, and confirm the model was not trained on a subset containing only their data).

- Schedule Reviews: Set a calendar reminder to review this workflow every 12 months or whenever the project scope changes.

The Bottom Line: Trust as Your Competitive Advantage

In a world increasingly skeptical of technology's intentions, responsible data collection is no longer optional. It is a fundamental business practice for the AI era. The frameworks, laws, and principles discussed here are not just about avoiding fines—they are about building durable trust.

By being transparent, minimizing your data footprint, securing what you hold, and respecting user autonomy, you send a powerful message: you see your users as partners, not as a resource to be mined. This ethical foundation will make your AI products more robust, your brand more resilient, and your innovations more welcome in the world.

Start where you are. Map one data flow. Improve one consent notice. The journey toward responsible AI is taken one deliberate, practical step at a time.

Further Reading

Share

What's Your Reaction?

Like

15210

Like

15210

Dislike

85

Dislike

85

Love

2240

Love

2240

Funny

312

Funny

312

Angry

40

Angry

40

Sad

15

Sad

15

Wow

548

Wow

548

Clarity on "legitimate interest" vs. "consent" was worth the read alone. We were defaulting to consent for everything, which made our signup flow clunky. For some core functions, legitimate interest is more appropriate and user-friendly.

This guide, combined with the "Legal Landscape 2025" article, gave me the confidence to have a productive conversation with our lawyer. Instead of just saying "make it compliant," I could ask specific questions about lawful basis and data minimization. Huge difference.

The bit about synthetic data carrying forward bias was a lightbulb moment. We thought we'd found a clever workaround. Back to the drawing board with a more ethical approach.

Six months after first reading this, I came back to find a specific section. It's holding up as a reference. The "Five Pillars" have become part of our team's vocabulary. Thank you.

Comment #5959 and the admin reply about public APIs is a whole article in itself. That's the murky territory a lot of us are in. More on that, please!

The link to the "Open Data & Licenses" article was crucial. We're starting a new computer vision project and are now looking at curated, licensed datasets first, instead of just scraping Google Images. A total mindset shift.