Legal Landscape for AI (2025 Update): What Creators Must Know

This comprehensive 2025 guide explains the rapidly evolving legal landscape for AI creators, developers, and businesses. We cover the EU AI Act's implementation, US state and federal regulations, copyright and IP challenges for AI-generated content, privacy compliance (GDPR, CCPA), liability frameworks, and international perspectives. The guide provides practical compliance checklists, risk assessment tools, and future-proofing strategies for AI projects. Whether you're building AI applications, using AI tools commercially, or deploying AI systems, this article helps you navigate legal requirements while fostering innovation responsibly. Includes specific guidance for different AI risk categories and creator types.

Introduction: Why AI Creators Need Legal Awareness

The artificial intelligence landscape has shifted from a technological frontier to a regulated industry within a few years. As of 2025, what began as voluntary ethical guidelines has evolved into binding legal frameworks with real consequences for non-compliance. For creators, developers, and businesses working with AI, understanding this legal landscape is no longer optional; it is essential for sustainable innovation.

This guide provides a comprehensive overview of the current AI legal environment, focusing specifically on what creators need to know. We'll move beyond theoretical discussions to provide actionable guidance, compliance checklists, and risk assessment frameworks. Whether you're building AI applications, using AI tools commercially, or deploying AI systems, this article will help you navigate the complex intersection of technology and law.

The Global Regulatory Landscape: 2025 Update

EU AI Act: The Most Comprehensive Framework

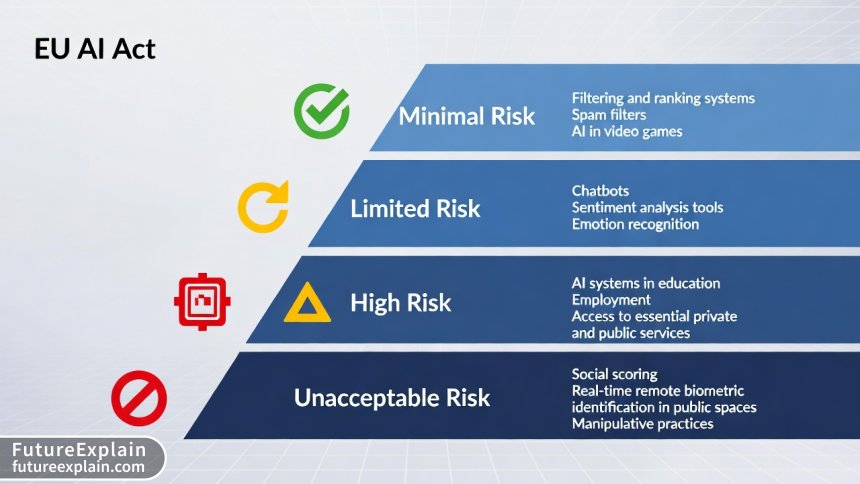

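The European Union's Artificial Intelligence Act entered into force on 1 August 2024 and applies in stages: prohibitions on unacceptable-risk practices from 2 February 2025, obligations for general-purpose AI models from 2 August 2025, and most high-risk requirements from 2 August 2026. It is the world's most comprehensive AI regulatory framework. Unlike previous technology regulations, the EU AI Act takes a risk-based approach that categorizes AI systems into four tiers: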

- Unacceptable Risk: Banned applications including social scoring by governments, real-time remote biometric identification in public spaces (with limited exceptions), and manipulative AI that exploits vulnerabilities

- High-Risk: Systems used in critical areas like healthcare, education, employment, essential services, law enforcement, and migration management

- Limited Risk: Systems with transparency requirements, such as chatbots that must disclose their AI nature

- Minimal Risk: Most consumer AI applications with voluntary codes of conduct

For creators, the most significant impact comes from the high-risk category requirements, which include:

- Robust risk assessment and mitigation systems

- High-quality training datasets to minimize bias

- Detailed documentation and logging capabilities

- Human oversight provisions

- High levels of accuracy, robustness, and cybersecurity
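
The four tiers and the high-risk requirements above can be turned into a first-pass triage helper. The following is a minimal sketch, not a legal determination: the names `RiskTier`, `triage`, `obligations`, and the keyword sets are hypothetical and only paraphrase the lists in this section. Actual classification depends on the Act's annexes and on legal review.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical labels that loosely paraphrase the tiers described in the article.
PROHIBITED_USES = {"government_social_scoring", "manipulative_exploitation"}
HIGH_RISK_AREAS = {
    "healthcare", "education", "employment",
    "essential_services", "law_enforcement", "migration",
}

# Mirrors the high-risk requirement list in the article.
HIGH_RISK_CHECKLIST = [
    "risk assessment and mitigation system",
    "training data quality and bias controls",
    "technical documentation and logging",
    "human oversight",
    "accuracy, robustness, and cybersecurity",
]


def triage(use_case: str, domain: str, interacts_with_people: bool) -> RiskTier:
    """Return a first-pass tier; the checks run from most to least restrictive."""
    if use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if domain in HIGH_RISK_AREAS:
        return RiskTier.HIGH
    if interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def obligations(tier: RiskTier) -> list[str]:
    """Map a tier to the obligations summarized in this section."""
    if tier is RiskTier.UNACCEPTABLE:
        return ["do not deploy in the EU"]
    if tier is RiskTier.HIGH:
        return list(HIGH_RISK_CHECKLIST)
    if tier is RiskTier.LIMITED:
        return ["disclose that users are interacting with AI"]
    return []
```

For example, `triage("resume_screening", "employment", True)` returns `RiskTier.HIGH`, and `obligations` then returns the five-item checklist. Treat the result as a prompt for a conversation with counsel, not as a conclusion.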

United States: Patchwork of State and Federal Regulations

Unlike the EU's unified approach, the United States has developed a complex patchwork of AI regulations. As of 2025, key developments include:

- Federal Algorithmic Accountability Act: A proposed bill, introduced in Congress several times but not enacted, that would require impact assessments for automated decision systems

- State-level regulations: Colorado has passed a broad AI consumer protection law (SB 24-205), while California and Illinois have passed more targeted AI laws covering areas such as transparency and employment

- Sector-specific regulations: Healthcare (FDA AI/ML software framework), finance (SEC AI guidance), and employment (EEOC algorithmic discrimination guidance)

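- Executive Order on AI: Executive Order 14110 (October 2023) set standards for AI safety and security, with specific requirements for developers of powerful AI systems, but it was rescinded in January 2025; federal policy has since shifted toward removing barriers to AI development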

China's Approach: Development-Focused Regulation

China has taken a distinctive approach to AI regulation, balancing innovation promotion with social stability concerns. Key elements include:

- Algorithm Registry: Mandatory registration of recommendation algorithms

- Deep Synthesis Regulation: Strict requirements for deepfake and synthetic media

- Data Security Law: Comprehensive data protection framework affecting AI training

- Industry-specific standards: Detailed technical standards for different AI applications

Emerging Markets: Diverse Approaches

Other significant jurisdictions include:

- United Kingdom: Pro-innovation approach with sector-specific regulators taking lead

- Canada: AIDA (Artificial Intelligence and Data Act), proposed as part of Bill C-27, lapsed when Parliament was prorogued in January 2025; no federal AI statute is in force

- Brazil: Proposed Marco Legal da IA framework (Bill 2338/2023), approved by the Senate in December 2024 and awaiting a vote in the Chamber of Deputies

- Singapore: AI Verify framework for voluntary testing and certification

Copyright and Intellectual Property Challenges

The intersection of AI and copyright represents one of the most contentious and rapidly evolving areas of law. As of 2025, several key issues demand creators' attention.

Training Data: The Copyright Conundrum

Most AI models are trained on vast datasets that include copyrighted materials. The legal status of this practice varies significantly by jurisdiction:

- United States: Multiple ongoing lawsuits (Getty Images v. Stability AI, Authors Guild v. OpenAI) testing fair use boundaries for AI training

- European Union: Text and Data Mining (TDM) exceptions in the Copyright Directive; rights holders can opt out of the general exception (Article 4) but not the scientific-research exception (Article 3)

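- Japan: Article 30-4 of the Copyright Act broadly permits use of copyrighted materials for information analysis, including commercial AI training, unless the use unreasonably prejudices the copyright owner's interests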

- United Kingdom: Proposed but withdrawn broad text and data mining exception

Practical Guidance for Creators:

- Maintain detailed records of training data sources and licensing

- Implement opt-out mechanisms for content creators who don't want their work used for training

- Consider synthetic data or properly licensed datasets for commercial applications

- Stay updated on major court decisions that could change the legal landscape
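
The record-keeping and opt-out points above could look like this in practice. This is a minimal sketch under stated assumptions: `SourceRecord`, `COMMERCIAL_OK`, and the filtering policy are hypothetical placeholders, and which licenses actually permit commercial training is a question for counsel, not for this allow-list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRecord:
    """One entry in a training-data provenance log (hypothetical schema)."""
    url: str
    license: str          # e.g. "CC-BY-4.0", "CC-BY-NC-4.0", "unknown"
    rights_holder: str
    opted_out: bool = False


# Placeholder allow-list for commercial use; an assumption, not legal advice.
COMMERCIAL_OK = {"CC0-1.0", "CC-BY-4.0", "proprietary-licensed"}


def usable_for_training(record: SourceRecord, commercial: bool) -> bool:
    """Honor opt-outs first, then apply the (assumed) license policy."""
    if record.opted_out:
        return False
    if commercial:
        return record.license in COMMERCIAL_OK
    # Assumed policy: non-commercial projects still exclude unknown licensing.
    return record.license != "unknown"


def filter_sources(records, commercial: bool):
    """Split records into (kept, dropped) so exclusions stay auditable."""
    kept, dropped = [], []
    for record in records:
        (kept if usable_for_training(record, commercial) else dropped).append(record)
    return kept, dropped
```

Returning the dropped records alongside the kept ones is deliberate: the exclusion list is itself evidence that opt-outs and licensing checks were applied.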

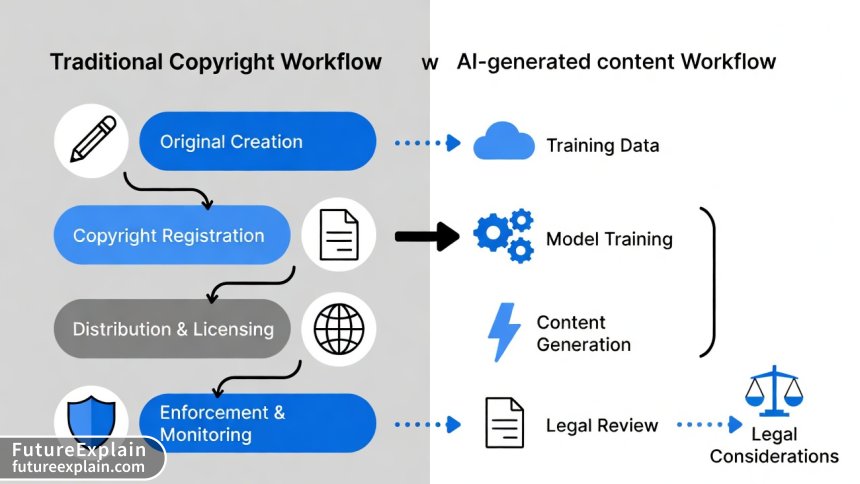

AI-Generated Content: Who Owns What?

The copyright status of AI-generated content remains unsettled in most jurisdictions:

- US Copyright Office: Maintains that works created solely by AI without human authorship cannot be copyrighted

- European Union: No specific provisions for AI-generated works, leaving uncertainty

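- China and United Kingdom: The UK's Copyright, Designs and Patents Act 1988 includes a specific provision for computer-generated works, and some Chinese courts have recognized copyright in AI-assisted works where the user contributed sufficient creative input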

For creators using AI tools, the key consideration is human creative contribution. Works where humans exercise significant creative control (selection, arrangement, modification) are more likely to receive copyright protection.

Licensing AI Outputs

When licensing AI-generated content, creators should:

- Clearly define the scope of permitted uses

- Include warranties regarding training data legality

- Address attribution requirements (or lack thereof)

- Consider downstream commercial rights

Privacy and Data Protection Compliance

AI systems often process personal data, triggering compliance with various privacy frameworks.

GDPR Compliance for AI Systems

The General Data Protection Regulation imposes specific requirements for AI systems processing EU residents' data:

- Lawful Basis: Must identify appropriate lawful basis for processing (consent, legitimate interest, etc.)

- Data Minimization: Collect only data necessary for specific purposes

- Purpose Limitation: Use data only for purposes specified at collection

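- Automated Decision-Making: Under Article 22, individuals have the right not to be subject to solely automated decisions with legal or similarly significant effects, and to obtain human intervention and contest the decision where such processing is permitted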

- Privacy by Design: Integrate data protection from the initial design phase
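
Data minimization and purpose limitation are easier to enforce when they are encoded in the data pipeline itself. Here is a minimal sketch, assuming a hypothetical `Purpose` registry; the field names and the `SUPPORT` example are illustrative and do not come from the regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Purpose:
    """A declared processing purpose (hypothetical schema)."""
    name: str
    lawful_basis: str            # e.g. "consent", "contract", "legitimate_interest"
    allowed_fields: frozenset    # the only fields this purpose may see


# Illustrative purpose; the field names are assumptions.
SUPPORT = Purpose("customer_support", "contract", frozenset({"user_id", "message"}))


def minimize(record: dict, purpose: Purpose) -> dict:
    """Data minimization: drop every field the purpose does not need."""
    return {key: value for key, value in record.items() if key in purpose.allowed_fields}


def purpose_allowed(requested: str, declared_at_collection: set) -> bool:
    """Purpose limitation: only purposes declared at collection time pass."""
    return requested in declared_at_collection
```

With this in place, a record containing a birth date is stripped down to `user_id` and `message` before it reaches the support model, and a later request to reuse the same data for `"model_training"` fails the check unless that purpose was declared at collection.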

US Privacy Laws Affecting AI

The patchwork of US state privacy laws creates compliance challenges:

- California (CPRA): Authorizes regulations giving consumers access and opt-out rights for automated decision-making technology, including profiling

- Colorado and Virginia: Similar provisions with slight variations

- Health Data: HIPAA compliance for healthcare AI applications

- Children's Data: COPPA requirements for AI systems targeting users under 13

Cross-Border Data Transfers

International AI projects must navigate complex data transfer rules:

- EU-US Data Privacy Framework adequacy decision (though under legal challenge)

- Standard Contractual Clauses for other jurisdictions

- Country-specific data localization requirements (Russia, China, India)

Liability and Risk Management

Determining liability for AI system failures or harms represents a significant legal challenge.

Product Liability vs. Service Liability

The legal framework differs based on how AI is provided:

- AI as Product: Traditional product liability principles may apply for defective AI systems causing harm

- AI as Service: Contract law and negligence principles typically govern

- Hybrid Models: Many AI offerings combine elements of both, creating legal complexity

Allocation of Liability

Multiple parties may share liability for AI system outcomes:

- Developers: For defects in design or implementation

- Deployers: For improper use or failure to monitor

- Data Providers: For biased or defective training data

- End Users: For misuse or failure to follow instructions

Insurance Considerations

AI-specific insurance products have emerged to address these risks:

- Errors and Omissions (E&O) insurance for AI developers

- Cyber liability insurance covering AI-related breaches

- Product liability insurance for AI systems classified as products

Sector-Specific Regulations

Different industries face unique regulatory challenges for AI implementation.

Healthcare AI

Medical AI applications face particularly stringent regulation:

- FDA Regulation: Software as a Medical Device (SaMD) framework for AI/ML healthcare applications

- Clinical Validation: Requirements for rigorous clinical testing

- Medical Device Reporting: Obligations to report adverse events

- HIPAA Compliance: Strict health data protection requirements

Financial Services AI

AI in finance triggers multiple regulatory concerns:

- Fair Lending Laws: Prohibition of discriminatory algorithmic decision-making

- Model Risk Management: Federal Reserve and OCC supervisory guidance (SR 11-7) on model development, validation, and governance

- Market Manipulation: Rules against algorithmic trading that manipulates markets

- Consumer Protection: Requirements for explainability and recourse

Employment and Hiring AI

Algorithmic hiring tools face increasing scrutiny:

- EEOC Guidance: Employers can be liable for discriminatory AI hiring tools

- Local Regulations: New York City's Local Law 144 requires bias audits of automated employment decision tools

- Worker Monitoring: Limits on AI surveillance of employees

Practical Compliance Framework for Creators

Implementing a systematic compliance approach reduces legal risks while fostering innovation.

Step 1: Risk Assessment and Categorization

Begin by assessing your AI system against regulatory frameworks:

- Identify all jurisdictions where the system will operate

- Categorize the system under relevant frameworks (EU AI Act risk levels, etc.)

- Document intended uses and potential misuse scenarios

- Assess data protection impact if processing personal data

Step 2: Documentation and Governance

Comprehensive documentation demonstrates compliance efforts:

- Technical Documentation: System architecture, training data, performance metrics

- Compliance Records: Risk assessments, testing results, monitoring logs

- Governance Framework: Clear roles and responsibilities for compliance

- Incident Response Plan: Procedures for addressing compliance failures
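
One way to keep technical documentation current is to store it as structured data next to the model and check it for gaps automatically. The sketch below assumes a hypothetical `ModelCard` schema; the field set is illustrative and is not the EU AI Act's official documentation template.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ModelCard:
    """Minimal documentation record (hypothetical fields)."""
    model_name: str
    version: str
    intended_use: str
    out_of_scope_uses: list
    training_data_sources: list
    metrics: dict
    responsible_owner: str
    last_reviewed: str  # ISO date string, e.g. "2025-03-31"


# Fields that must not be empty before a release (an assumed policy).
REQUIRED_NONEMPTY = ("intended_use", "training_data_sources", "metrics", "responsible_owner")


def missing_fields(card: ModelCard) -> list:
    """Return the required fields that are still empty."""
    data = asdict(card)
    return [name for name in REQUIRED_NONEMPTY if not data[name]]


def to_json(card: ModelCard) -> str:
    """Serialize with sorted keys so version-control diffs stay readable."""
    return json.dumps(asdict(card), indent=2, sort_keys=True)
```

Running `missing_fields` in a release pipeline turns "documentation is incomplete" into a failing check instead of a finding in a later audit.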

Step 3: Testing and Validation

Regular testing ensures ongoing compliance:

- Bias Testing: Assess for discriminatory impacts across protected characteristics

- Security Testing: Vulnerability assessments and penetration testing

- Performance Validation: Verify accuracy claims under real-world conditions

- Adversarial Testing: Attempt to "break" or manipulate the system
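
Bias testing often starts with a simple comparison of selection rates across groups. The following is a minimal sketch of an impact-ratio check in plain Python; the function names are hypothetical, and the 0.8 threshold is the "four-fifths" rule of thumb from US employment practice, used here as an assumption and not as a legal pass/fail line. Libraries such as Fairlearn or AI Fairness 360 offer more complete metrics.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, was_selected) pairs -> rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any positive outcomes")
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(ratios, threshold=0.8):
    """Groups whose impact ratio falls below the (assumed) threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Illustrative data: 40 of 100 selected in group A, 24 of 100 in group B.
outcomes = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 24 + [("B", False)] * 76
)
ratios = impact_ratios(selection_rates(outcomes))
print(flagged_groups(ratios))
```

In this made-up data, group B is selected at roughly 60% of group A's rate, so it is flagged for closer review. A flag is a reason to investigate, not proof of unlawful discrimination.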

Step 4: Monitoring and Maintenance

Compliance requires continuous effort:

- Performance Monitoring: Track accuracy, fairness, and other metrics over time

- Regulatory Monitoring: Stay updated on legal developments in target jurisdictions

- User Feedback: Establish channels for users to report concerns

- Periodic Review: Regular comprehensive compliance reviews
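
Performance monitoring can be reduced to comparing current metrics against a recorded baseline and flagging drops beyond a tolerance. This is a minimal sketch; `MetricCheck`, the metric names, and the tolerances are hypothetical and would need to be set per system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricCheck:
    """One monitored metric, where higher values are better."""
    name: str
    baseline: float   # value recorded at the last compliance review
    current: float    # value measured on recent production data
    max_drop: float   # tolerated absolute decrease (an assumed policy value)


def degraded(check: MetricCheck) -> bool:
    """True when the metric fell by more than the tolerated amount."""
    return (check.baseline - check.current) > check.max_drop


def review_needed(checks) -> list:
    """Names of metrics that should trigger a compliance review."""
    return [check.name for check in checks if degraded(check)]
```

For instance, an accuracy drop from 0.92 to 0.90 with a tolerance of 0.03 passes, while a fairness impact ratio falling from 0.90 to 0.78 with a tolerance of 0.05 is flagged for review.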

Future Legal Trends and Preparing for Change

The AI legal landscape will continue evolving rapidly. Creators should prepare for these upcoming developments.

Emerging Regulatory Areas

Several areas are likely to see increased regulation:

- Foundation Models: Specific regulations for large-scale AI models

- Synthetic Media: Enhanced requirements for deepfakes and generative AI

- AI Agents: Liability frameworks for autonomous AI systems

- Quantum AI: Emerging regulations for quantum-enhanced AI

International Harmonization Efforts

Despite current fragmentation, international coordination is increasing:

- G7 Hiroshima Process: International guiding principles for AI

- OECD AI Principles: Widely adopted international standards

- UN Initiatives: The Global Digital Compact, adopted in September 2024, includes commitments on AI governance

Technology-Specific Regulations

Certain technologies will face targeted regulation:

- Generative AI: Content provenance, watermarking, and disclosure requirements

- Computer Vision: Enhanced privacy protections for surveillance applications

- Natural Language Processing: Content moderation and misinformation concerns

Ethical Considerations and Legal Risk Mitigation

Beyond strict legal compliance, ethical considerations impact legal risk.

Ethical AI Principles

Adopting ethical principles reduces legal exposure:

- Transparency: Clear communication about AI system capabilities and limitations

- Fairness: Proactive efforts to identify and mitigate bias

- Accountability: Clear responsibility for system outcomes

- Privacy: Respect for user data and minimization of collection

- Human Oversight: Meaningful human control over significant decisions

Stakeholder Engagement

Engaging diverse stakeholders improves legal and ethical outcomes:

- User Consultation: Understanding user concerns and expectations

- Expert Review: Involving legal, ethical, and domain experts

- Community Input: Considering broader societal impacts

Case Studies: Legal Challenges and Solutions

Case Study 1: Healthcare Diagnostic AI

A startup developing AI for medical image analysis navigated FDA regulations by implementing rigorous clinical validation, comprehensive documentation, and post-market surveillance. Their proactive approach to compliance facilitated faster regulatory approval and reduced liability exposure.

Case Study 2: Recruitment AI Platform

An AI-powered hiring platform faced discrimination claims but successfully defended itself by demonstrating comprehensive bias testing, transparent candidate communication, and human oversight mechanisms. Their documented compliance efforts proved crucial in legal proceedings.

Case Study 3: Generative AI Content Creation Tool

A generative AI tool for marketers addressed copyright concerns by implementing robust content filtering, opt-out mechanisms for content creators, and clear user guidelines about copyright responsibility. This multi-layered approach reduced legal risks while maintaining functionality.

Resources and Tools for AI Legal Compliance

Several resources can assist creators with legal compliance:

Documentation Templates

- EU AI Act technical documentation template

- AI impact assessment framework

- Model cards template for responsible AI documentation

Testing Tools

- Bias testing frameworks (AI Fairness 360, Fairlearn)

- Adversarial testing tools

- Privacy impact assessment tools

Professional Support

- AI-specialized legal counsel

- Ethical AI consultants

- Compliance automation platforms

Conclusion: Balancing Innovation and Compliance

The AI legal landscape in 2025 represents both challenge and opportunity for creators. While compliance requirements add complexity, they also establish guardrails that foster responsible innovation and build user trust. The most successful creators will view legal compliance not as a barrier but as a competitive advantage, differentiating themselves through transparency, fairness, and accountability.

As regulations continue evolving, the key to sustainable AI development lies in proactive compliance, continuous learning, and ethical commitment. By understanding the legal framework, implementing systematic compliance processes, and staying informed about developments, creators can navigate this complex landscape while pushing the boundaries of what AI can achieve.

Disclaimer: This article provides general information about AI legal considerations and is not legal advice. Regulations vary by jurisdiction and change frequently. Consult qualified legal counsel for advice specific to your situation.

Further Reading

- Ethical AI Explained: Why Fairness and Bias Matter

- Legal Landscape: AI Regulation Overview (2024 Update)

- Responsible Data Collection: Consent and Compliance (Practical)

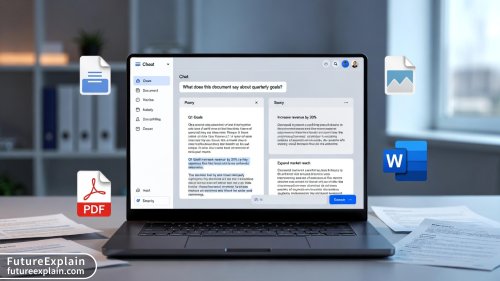

Visuals Produced by AI

Follow-up question: For AI systems that evolve with user feedback (continuous learning), how do compliance documentation requirements work? Do we need to update technical docs every time the model changes?

Great question Jack. For continuously learning systems: Major changes trigger new assessment, minor changes require documentation updates. We recommend quarterly documentation reviews for evolving systems.

As a content creator using AI tools, the copyright ownership confusion is frustrating. I spend hours editing AI outputs but still worry about copyright status. Clearer laws would help creators tremendously.

The synthetic data mention is key. We've switched to synthetic training data for our customer service AI. It eliminated copyright concerns but introduced new validation challenges.

The international perspective is valuable but overwhelming. We're a 5-person team selling globally. Complying with EU, US, China, and Brazil regulations simultaneously seems impossible for small teams.

Charles, this is a common challenge. Start with prioritizing markets by revenue and using the highest standard as baseline. We're planning a 'small team AI compliance' guide specifically.

Creating educational AI tools here. The Children's Data section mentioning COPPA saved us. We were collecting birth dates 'for personalized learning' which would have been a compliance disaster.

The insurance section is crucial but underdeveloped. We've been shopping for AI liability insurance and found massive premium differences based on risk category. High-risk AI systems can be 10x more expensive.