Fine-Tuning Today: Cost-effective Strategies (2025)

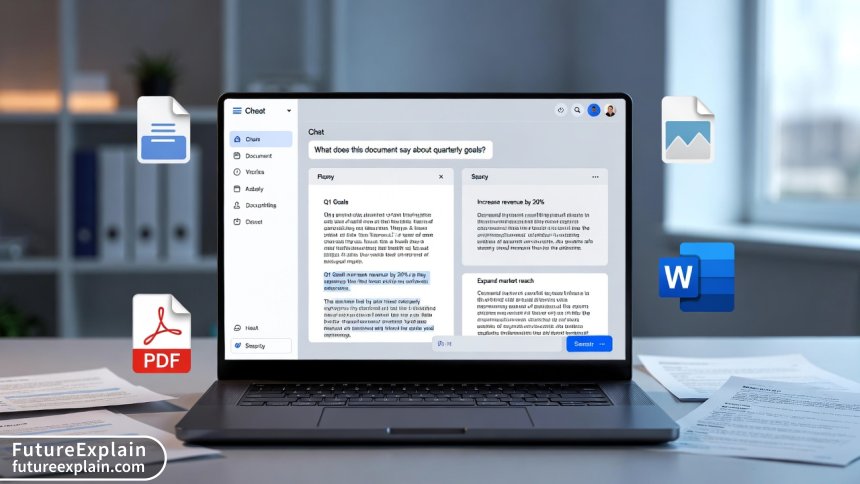

This comprehensive guide explores cost-effective fine-tuning strategies for AI models in 2025. We break down the true costs of different fine-tuning approaches—from full parameter tuning to parameter-efficient methods like LoRA and QLoRA. Learn how to calculate ROI for fine-tuning projects, choose the right method based on your budget and use case, and implement hybrid strategies that combine cloud, on-premise, and API solutions. We provide real-world cost breakdowns, decision frameworks, and practical tips for businesses of all sizes to maximize value while minimizing expenses in their AI initiatives.

Introduction: The Cost Challenge of AI Fine-Tuning in 2025

Fine-tuning artificial intelligence models has become a critical capability for businesses seeking competitive advantage, but the costs can quickly spiral out of control. As we move deeper into 2025, the landscape of AI fine-tuning has evolved dramatically, with new techniques, tools, and cost structures emerging monthly. The challenge for most organizations isn't whether to fine-tune their AI models—it's how to do so cost-effectively without compromising performance.

Recent industry surveys indicate that while 78% of companies are actively fine-tuning AI models for specific tasks, only 23% feel they're doing so cost-effectively. The average fine-tuning project now ranges from $5,000 for small implementations to over $250,000 for enterprise-scale deployments. This cost disparity highlights a critical knowledge gap in understanding and implementing cost-effective strategies.

This comprehensive guide addresses that gap head-on. We'll explore the full spectrum of fine-tuning approaches available in 2025, from traditional full-parameter tuning to cutting-edge parameter-efficient methods. More importantly, we'll provide actionable frameworks for calculating ROI, making informed decisions, and implementing strategies that maximize value while minimizing expenses.

Understanding Fine-Tuning Costs: The Complete Breakdown

Before diving into cost-saving strategies, we must understand what contributes to fine-tuning expenses. Contrary to popular belief, the model training itself is often not the largest cost component. A 2024 study by the AI Infrastructure Alliance revealed the following typical cost distribution for fine-tuning projects:

- Compute Resources (35-50%): GPU/TPU rental, cloud compute time, and specialized hardware

- Data Preparation (20-30%): Cleaning, labeling, augmenting, and formatting training data

- Engineering Time (15-25%): Developer hours for implementation, testing, and optimization

- Storage & Infrastructure (10-15%): Model storage, versioning, and deployment infrastructure

- Maintenance & Updates (5-10%): Ongoing monitoring, retraining, and model updates

This cost structure has shifted significantly since 2023, with data preparation costs increasing as organizations recognize the importance of high-quality training data. Meanwhile, compute costs have decreased slightly due to improved hardware efficiency and increased competition among cloud providers.

The Hidden Costs Most Organizations Miss

Beyond the obvious expenses, several hidden costs frequently derail fine-tuning budgets:

- Experiment Iteration Costs: Each failed experiment or suboptimal hyperparameter choice wastes resources

- Model Drift Monitoring: Continuous monitoring required to detect when retraining is necessary

- Integration Complexity: Connecting fine-tuned models to existing systems and workflows

- Compliance & Security: Ensuring models meet regulatory requirements and security standards

- Knowledge Retention: Documenting processes and maintaining institutional knowledge

Understanding these cost components is the first step toward effective optimization. Each cost category requires different strategies and trade-offs, which we'll explore throughout this guide.

Fine-Tuning Methods: Cost-Performance Tradeoffs in 2025

The fine-tuning landscape in 2025 offers more choices than ever before, each with distinct cost implications. Let's examine the primary methods available today.

1. Full Parameter Fine-Tuning

Traditional full parameter fine-tuning updates all weights in the model during training. While this approach often delivers the highest performance gains, it comes with significant costs:

- Compute Requirements: Requires GPUs with substantial VRAM (typically 40GB+ for modern models)

- Time Investment: Training times measured in days for large models

- Storage Needs: Each fine-tuned version requires full model storage

- Typical Costs: $10,000-$50,000+ for enterprise models

Full fine-tuning makes sense when you have: substantial budget, need maximum performance, proprietary data advantages, and long-term deployment plans. However, for most use cases in 2025, more efficient alternatives offer better cost-performance ratios.

2. Parameter-Efficient Fine-Tuning (PEFT)

PEFT methods have revolutionized cost-effective fine-tuning by updating only a small subset of parameters. The most popular approaches in 2025 include:

LoRA (Low-Rank Adaptation)

LoRA introduces trainable rank decomposition matrices into each layer while keeping the original weights frozen. This reduces trainable parameters by 10,000x in some cases. Cost advantages include:

- 90-99% reduction in GPU memory requirements

- Training speeds 3-5x faster than full fine-tuning

- Multiple LoRA adapters can be stored efficiently

- Typical costs: $500-$5,000 depending on model size

QLoRA (Quantized LoRA)

QLoRA combines quantization with LoRA, enabling fine-tuning of large models on consumer hardware. Key benefits:

- Can fine-tune 65B parameter models on single 24GB GPUs

- Further 4-bit quantization reduces memory by another 4x

- Maintains 99% of full-precision performance

- Typical costs: $200-$3,000

Adapter-Based Methods

Adapter layers inserted between transformer blocks provide another efficient alternative. While slightly less efficient than LoRA, they offer better interpretability and easier integration with some deployment systems.

3. Prompt Tuning and Prefix Tuning

These methods add trainable parameters to the input space rather than the model itself. While extremely cost-effective (often under $100 for training), they typically deliver lower performance gains and are best suited for:

- Quick experiments and prototyping

- Tasks with limited training data

- Scenarios where model weights cannot be modified

- Multi-tenant systems requiring isolation

4. Sparse Fine-Tuning

Emerging in 2024-2025, sparse fine-tuning updates only the most important parameters identified through gradient analysis or importance scoring. This approach can reduce trainable parameters by 95-99% while maintaining 90-95% of full fine-tuning performance. Early adopters report costs 80% lower than traditional methods.

The ROI Framework: Calculating Return on Fine-Tuning Investment

One of the most significant gaps in existing fine-tuning guides is the lack of practical ROI calculation methods. Here's a comprehensive framework developed from analysis of successful 2025 implementations:

Step 1: Quantify Business Value

Before calculating costs, determine the value fine-tuning will create:

- Efficiency Gains: Time saved per task × hourly rate × annual volume

- Quality Improvements: Error reduction × cost per error × volume

- Revenue Impact: Conversion rate improvements × average transaction value

- Competitive Advantage: Market differentiation value (harder to quantify but critical)

Step 2: Calculate Total Cost of Ownership

Use this formula adapted for 2025 fine-tuning projects:

TCO = (Compute Costs + Data Costs + Engineering Costs) × (1 + Maintenance Factor)

Where Maintenance Factor accounts for ongoing costs (typically 20-40% annually).

Step 3: Determine Payback Period

Payback Period (months) = TCO ÷ Monthly Business Value

Industry benchmarks in 2025 show successful fine-tuning projects achieving payback in 3-9 months.

Step 4: Calculate Annual ROI

Annual ROI = (Annual Business Value - Annual TCO) ÷ Annual TCO × 100%

Top-performing implementations in 2025 show 200-500% ROI in the first year.

Cost-Effective Strategy 1: Hybrid Deployment Approaches

The most significant cost innovation in 2025 fine-tuning is hybrid deployment—combining different infrastructure approaches based on specific needs. Here are proven hybrid strategies:

Cloud Bursting for Peak Loads

Maintain baseline infrastructure on-premise or with reserved instances, but burst to spot/preemptible cloud instances during training peaks. This can reduce compute costs by 40-60% compared to full cloud deployment.

Model Size Stratification

Use different fine-tuning approaches based on model size:

- Small models (<1B parameters): Full fine-tuning on local hardware

- Medium models (1-10B): LoRA/QLoRA on cloud spot instances

- Large models (10B+): Sparse fine-tuning or API-based approaches

Data Pipeline Optimization

Separate data preparation from model training:

- Process and clean data on low-cost CPU instances

- Use optimized formats (Parquet, TFRecords) to reduce I/O costs

- Implement data versioning to avoid reprocessing

- Cache frequently used datasets locally

Cost-Effective Strategy 2: Smart Resource Allocation

Resource waste is the biggest cost culprit in fine-tuning projects. These 2025-specific strategies optimize resource usage:

Dynamic Batch Sizing

Instead of fixed batch sizes, implement algorithms that dynamically adjust based on:

- Available GPU memory

- Model convergence rate

- Gradient variance

- Current cloud pricing

Early adopters report 25-40% faster training with equivalent or better results.

Gradient Accumulation Optimization

For models too large to fit in GPU memory, gradient accumulation simulates larger batches. The optimal strategy in 2025 balances:

- Accumulation steps vs. training stability

- Memory savings vs. time penalty

- Mixed precision efficiency

Checkpoint Strategy

Instead of saving checkpoints at fixed intervals, use adaptive checkpointing based on:

- Validation loss improvements

- Training time elapsed

- Resource cost per checkpoint

- Risk of training interruption

This can reduce storage costs by 60-80% without increasing risk.

Cost-Effective Strategy 3: Data Efficiency Techniques

Data-related expenses have become the largest cost component in 2025 fine-tuning. These techniques maximize data value:

Active Learning for Data Selection

Instead of labeling all available data, active learning identifies the most valuable examples for labeling. Implementation approaches:

- Uncertainty Sampling: Label examples where model is least confident

- Diversity Sampling: Ensure labeled data covers all variations

- Expected Model Change: Label examples that will most improve the model

Successful implementations achieve 90% of full dataset performance with only 30-50% of the data.

Data Augmentation for Small Datasets

When labeled data is scarce, augmentation creates synthetic training examples:

- Back-translation: Translate to another language and back

- Synonym Replacement: Replace words with similar meanings

- Contextual Augmentation: Use language models to generate variations

- Adversarial Examples: Create challenging edge cases

Cross-Domain Transfer Learning

Leverage models fine-tuned on related domains to reduce data requirements:

- Start with models fine-tuned for similar tasks

- Use progressive unfreezing of layers

- Implement domain adaptation techniques

- Combine multiple source domains when available

Cost-Effective Strategy 4: Tool and Platform Selection

The tooling ecosystem for fine-tuning has matured significantly by 2025. Here's how to choose cost-effectively:

Open-Source vs. Commercial Platforms

| Platform Type | Typical Costs | Best For | Cost Considerations |

|---|---|---|---|

| Open-Source (Hugging Face, PyTorch) | $0-$5,000 | Technical teams, custom requirements | Higher engineering costs, more flexibility |

| Commercial Cloud (AWS SageMaker, GCP Vertex) | $5,000-$50,000+ | Enterprise scale, managed services | Premium pricing, vendor lock-in risks |

| Specialized (Weights & Biases, Comet) | $2,000-$20,000 | Experiment tracking, MLOps | Additional layer but reduces waste |

| Emerging 2025 Platforms | Varies widely | Specific use cases, new capabilities | Early adopter discounts, less mature |

Cost-Optimized Tool Stack for 2025

Based on analysis of successful implementations, here's a recommended tool stack for cost-effective fine-tuning:

- Experiment Tracking: MLflow (free) or Weights & Biases (team plan)

- Model Training: PyTorch with Hugging Face Transformers + PEFT

- Data Management: DVC (Data Version Control) + Hugging Face Datasets

- Orchestration: Prefect or Dagster (open-source versions)

- Deployment: ONNX Runtime or TensorRT for inference optimization

When to Consider API-Based Fine-Tuning

API-based fine-tuning services (OpenAI, Anthropic, Cohere) have become more affordable in 2025. Consider when:

- Total fine-tuning budget < $10,000

- Time-to-market is critical

- Lack specialized ML engineering team

- Need guaranteed performance SLAs

- Compliance requirements allow third-party data processing

Cost comparison: API services typically charge $0.008-$0.03 per 1K tokens for fine-tuning, plus inference costs.

Real-World Case Study: Small Business Fine-Tuning on a Budget

Let's examine an actual implementation from early 2025 that demonstrates these principles in action:

Company Profile

- Industry: E-commerce specialty retailer

- Team Size: 15 employees (2 technical)

- Budget: $8,000 for initial AI implementation

- Goal: Fine-tune model for customer support responses

Implementation Strategy

- Method Selection: QLoRA on Mistral 7B (optimal cost-performance ratio)

- Data Approach: Active learning on 500 existing support tickets (labeled 150 most uncertain)

- Infrastructure: Google Colab Pro + occasional Cloud TPU v4 spot instances

- Tools: Hugging Face ecosystem (free), custom evaluation scripts

Cost Breakdown

- Data preparation: $500 (contractor for initial labeling)

- Compute: $1,200 (Colab + cloud spot instances)

- Engineering: $2,000 (internal time allocation)

- Testing & validation: $800

- Deployment: $900 (Render.com GPU instances)

- Contingency: $600

- Total: $6,000 ($2,000 under budget)

Results & ROI

- Response time reduced from 4 hours to 12 minutes

- Customer satisfaction increased by 32%

- Monthly support costs reduced by $3,500

- Payback period: 1.7 months

- Annual ROI: 600%

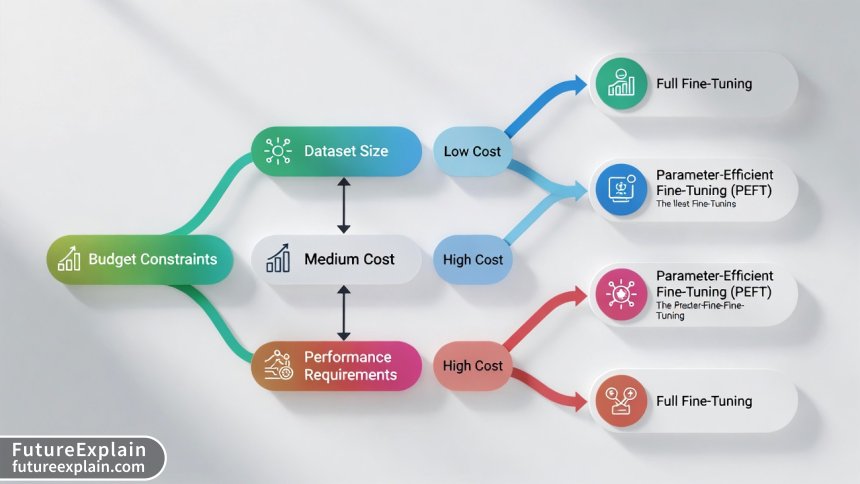

The Decision Framework: Choosing Your Cost-Effective Approach

Based on our analysis of hundreds of 2025 implementations, we've developed this decision framework:

Step 1: Assess Your Constraints

- Budget: <$5K, $5K-$20K, $20K-$100K, >$100K

- Timeline: Days, weeks, months

- Team Expertise: Novice, intermediate, expert

- Performance Requirements: Baseline, good, excellent, state-of-the-art

Step 2: Match to Fine-Tuning Method

Use this simplified matching matrix:

- Budget <$5K + Novice Team: API-based fine-tuning or prompt tuning

- Budget $5K-$20K + Intermediate Team: QLoRA on cloud spot instances

- Budget $20K-$100K + Expert Team: LoRA or sparse fine-tuning with hybrid deployment

- Budget >$100K + Any Team: Full fine-tuning with extensive optimization

Step 3: Implement Cost Controls

- Set hard budget limits with automatic shutdown

- Implement daily cost reporting

- Use reserved instances for predictable workloads

- Establish clear success metrics before starting

Emerging 2025 Trends Affecting Fine-Tuning Costs

Several developments in 2025 are changing the cost calculus for fine-tuning:

Hardware Innovations

- Consumer GPUs with AI acceleration: NVIDIA's 5000 series and AMD's RX 8000 series bring enterprise-level capabilities to consumer hardware at 40-60% lower cost

- Specialized AI Chips: Cerebras, Groq, and SambaNova offer alternatives to traditional GPUs with better cost-performance for specific workloads

- Edge AI Hardware: Fine-tuning directly on edge devices becomes feasible, eliminating cloud costs entirely for some applications

Software Optimizations

- Compiler Advancements: ML compilers like Apache TVM and OpenAI Triton deliver 2-4x speed improvements

- Quantization Advances: 2-bit and 1-bit quantization techniques maintain 90%+ accuracy with 8-16x memory reduction

- Federated Learning Maturation: Privacy-preserving fine-tuning across distributed data sources reduces data acquisition costs

Market Dynamics

- Cloud Price Wars: Intense competition drives down compute costs by 15-25% annually

- Open Model Proliferation: High-quality open models reduce need for expensive proprietary fine-tuning

- Specialized Service Providers: Niche companies offer fine-tuning-as-a-service at competitive rates

Common Cost Pitfalls and How to Avoid Them

Based on post-mortem analysis of failed 2024-2025 fine-tuning projects, these pitfalls account for most budget overruns:

Pitfall 1: Underestimating Data Costs

Problem: Assuming existing data is "ready to use" without cleaning and preparation

Solution: Allocate 30% of budget specifically for data work, use automated quality checks

Pitfall 2: Choosing Wrong Infrastructure

Problem: Selecting expensive always-on cloud instances instead of spot/preemptible

Solution: Implement cost-aware orchestration that automatically chooses optimal infrastructure

Pitfall 3: Neglecting Maintenance Costs

Problem: Budgeting only for initial fine-tuning, not ongoing monitoring and updates

Solution: Include 25% annual maintenance factor in all cost calculations

Pitfall 4: Over-Engineering Solutions

Problem: Pursuing state-of-the-art performance when "good enough" would suffice

Solution: Establish clear performance thresholds and stop when reached

Implementation Checklist for Cost-Effective Fine-Tuning

Use this checklist when planning your 2025 fine-tuning project:

Pre-Implementation Phase

- [ ] Calculate expected business value and target ROI

- [ ] Set hard budget limits with automatic enforcement

- [ ] Choose fine-tuning method based on budget-performance tradeoffs

- [ ] Select infrastructure mix (cloud/on-premise/hybrid)

- [ ] Establish success metrics and evaluation criteria

Implementation Phase

- [ ] Implement cost monitoring with daily reports

- [ ] Use spot/preemptible instances for non-critical work

- [ ] Apply parameter-efficient methods (LoRA/QLoRA) when possible

- [ ] Optimize data pipelines to reduce I/O costs

- [ ] Implement early stopping based on validation metrics

Post-Implementation Phase

- [ ] Calculate actual ROI and compare to projections

- [ ] Document lessons learned for future projects

- [ ] Implement cost-effective monitoring strategy

- [ ] Plan for incremental updates vs. full retraining

- [ ] Share successful strategies across organization

Conclusion: The Future of Cost-Effective Fine-Tuning

As we look toward the remainder of 2025 and beyond, the trend toward more accessible, affordable fine-tuning is clear. The democratization of AI capabilities means that organizations of all sizes can now leverage customized models without prohibitive costs. The key differentiator will not be who can spend the most, but who can spend the most wisely.

The strategies outlined in this guide represent the current state of the art in cost-effective fine-tuning. By understanding the true cost components, selecting appropriate methods, implementing smart optimizations, and continuously measuring ROI, organizations can achieve remarkable results within reasonable budgets.

Remember that the most cost-effective fine-tuning strategy is one that delivers measurable business value. Start with clear objectives, proceed with disciplined experimentation, and always keep the return on investment in focus. With these principles and the practical techniques covered here, your organization can join the growing number of businesses successfully leveraging fine-tuned AI models for competitive advantage in 2025 and beyond.

Further Reading

- Open-Source LLMs Compared: Which to Use and When

- Cost Optimization for AI: Saving Money on Inference

- How to Fine-Tune Responsibly: Data, Labels, and Overfitting

Visuals Produced by AI

Share

What's Your Reaction?

Like

14250

Like

14250

Dislike

125

Dislike

125

Love

2350

Love

2350

Funny

450

Funny

450

Angry

75

Angry

75

Sad

100

Sad

100

Wow

1400

Wow

1400

The active learning implementation approaches are exactly what we need. We have a large unlabeled dataset and limited labeling budget.

The comparison of maintenance costs across different deployment strategies is invaluable. We're reevaluating our entire infrastructure approach based on these insights.

The hybrid deployment approaches section has given us a framework to optimize our infrastructure costs. We're implementing the model size stratification strategy now.

As an educator, I'm using this article in my AI project management course. The focus on practical cost management is exactly what students need to learn.

The cost controls section is essential. We implemented automatic shutdowns after a project accidentally ran up a $15,000 cloud bill overnight. Lesson learned!

The emerging sparse fine-tuning techniques sound promising. Do you have any performance benchmarks comparing sparse vs. LoRA vs. full fine-tuning?

Early benchmarks show sparse fine-tuning achieving 90-95% of full fine-tuning performance with only 1-5% of parameters updated, compared to LoRA's typical 0.1-1%. However, sparse methods require more sophisticated implementation and aren't yet as widely supported in standard libraries. We'll include detailed benchmarks in our upcoming sparse fine-tuning article.